Section 1: The Problem

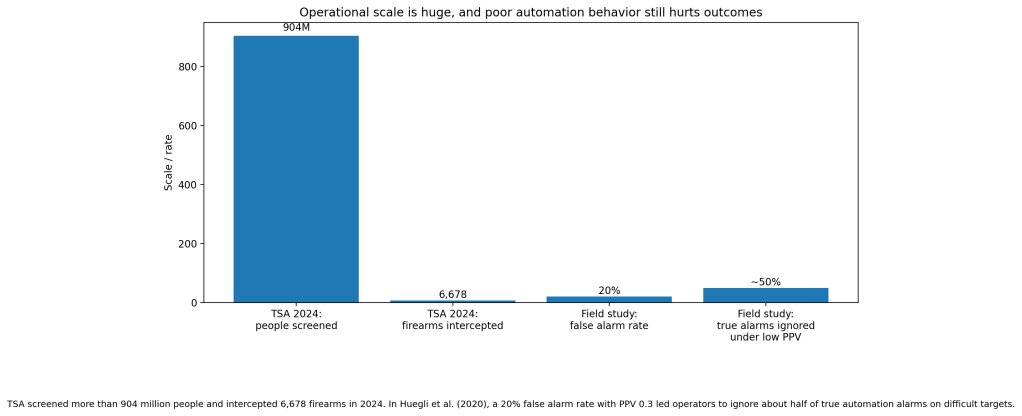

Airport security runs at huge scale. In 2024, TSA screened more than 904 million people at U.S. checkpoints and intercepted 6,678 firearms in carry-on bags, or 7.4 firearms per million passengers screened. That is a reminder of how much pressure sits on checkpoint systems every day, even before you get to harder threats like explosives and improvised devices.
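As a quick check on the per-million figure, here is the arithmetic. It uses the rounded 904 million passenger count, so the rate is approximate.

```python
# Reproduce the per-million firearm rate from TSA's 2024 checkpoint figures.
# 904 million is the rounded "more than 904 million" count, so this is approximate.
firearms_intercepted = 6_678
passengers_screened = 904_000_000

rate_per_million = firearms_intercepted / passengers_screened * 1_000_000
print(f"{rate_per_million:.1f} firearms per million passengers")  # 7.4 firearms per million passengers
```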

The weak link is still visual inspection under clutter. Human screeners must read dense X-ray images quickly, while bags contain overlapping electronics, liquids, clothing, and metal objects. A major 2022 review of X-ray security imaging found that clutter, occlusion, limited training data, and operator fatigue all hurt manual performance, especially when dangerous items are partly hidden or visually similar to harmless ones.

That is why this domain fits data science so well. Modern computer vision systems do more than classify whole images. They detect, localize, rank, and flag suspicious regions in real time. The goal is not to replace officers. It is to reduce misses, cut unnecessary bag searches, and help checkpoints handle growing passenger volume without letting detection quality slip.

Section 2: What Research Shows

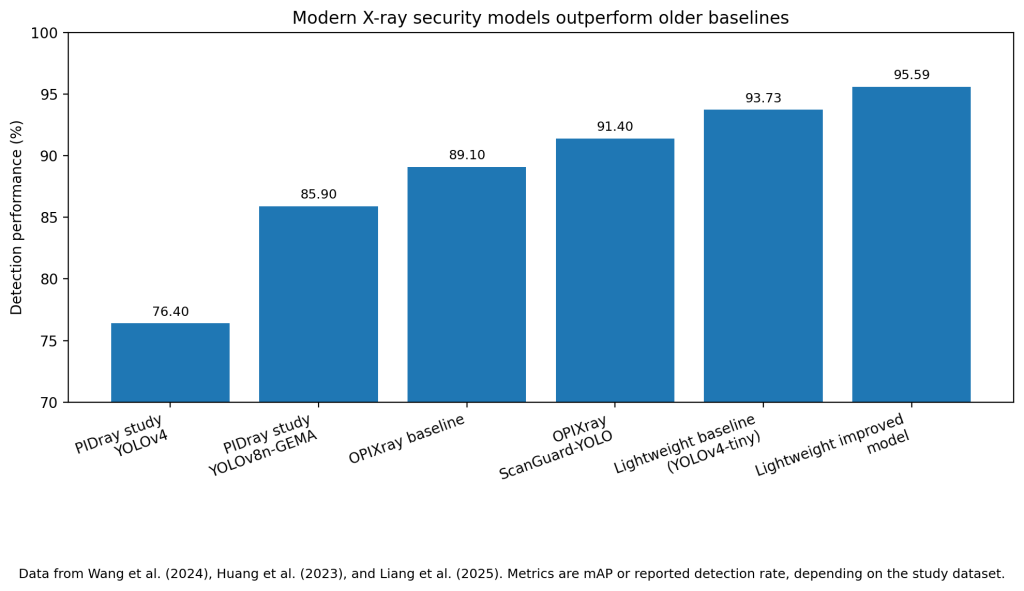

The retrospective model results are strong. On the PIDray dataset, Wang et al. reported that an improved YOLOv8n-GEMA model reached 85.9% mAP, beating YOLOv4 by 9.5 points, YOLOv5s by 2.3, YOLOv7 by 2.7, and YOLOv8n by 4.1. In the same paper, the baseline YOLOv8n result on PIDray was 81.8% mAP, which shows a clear jump from an already competitive detector.
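The paragraph gives gaps rather than every baseline score, so a few lines of arithmetic can back out the implied numbers. This is plain subtraction on the quoted figures. It assumes all five results use the same mAP metric and PIDray test split. The only value the paragraph states directly is YOLOv8n's 81.8%, so that is the one the script checks.

```python
# Back out each baseline's implied PIDray mAP from the gaps reported
# against the 85.9% YOLOv8n-GEMA result (assumes a shared metric and test split).
improved_map = 85.9
reported_gap = {"YOLOv4": 9.5, "YOLOv5s": 2.3, "YOLOv7": 2.7, "YOLOv8n": 4.1}

implied_map = {name: round(improved_map - gap, 1) for name, gap in reported_gap.items()}
for name, score in implied_map.items():
    print(f"{name}: {score}% mAP")

# Sanity check against the baseline stated in the paper.
assert implied_map["YOLOv8n"] == 81.8
```

The implied scores come out to 76.4% for YOLOv4, 83.6% for YOLOv5s, and 83.2% for YOLOv7. That puts YOLOv8n, not YOLOv4, as the most informative comparison for the GEMA changes.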

Other studies show the same pattern. Huang et al. reported that ScanGuard-YOLO improved recall by 4.5 percentage points, mAP@0.5 by 2.3 points, and F1 by 2.3 points over its baseline on OPIXray. The baseline mAP@0.5 was 0.891, while the full improved model reached 0.914. Liang et al. reported a lightweight X-ray model with a top detection rate of 95.59%, which was a 1.86-point gain over the YOLOv4-tiny baseline while still running at 122 FPS.
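mAP@0.5 and F1 are easy to quote and easy to misread. Below is a minimal sketch of the two ingredients under their standard definitions. A prediction of the right class counts as a true positive only if its box overlaps the ground-truth box with an intersection-over-union (IoU) of at least 0.5. F1 is the harmonic mean of precision and recall. The `Box` type and helper names are illustrative, not taken from either paper. Full mAP also ranks detections by confidence and averages precision across recall levels and classes, and this sketch leaves that part out.

```python
from typing import NamedTuple

class Box(NamedTuple):
    """Axis-aligned box: (x1, y1) is the top-left corner, (x2, y2) the bottom-right."""
    x1: float
    y1: float
    x2: float
    y2: float

def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a.x2, b.x2) - max(a.x1, b.x1))
    inter_h = max(0.0, min(a.y2, b.y2) - max(a.y1, b.y1))
    inter = inter_w * inter_h
    area_a = (a.x2 - a.x1) * (a.y2 - a.y1)
    area_b = (b.x2 - b.x1) * (b.y2 - b.y1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def is_true_positive(pred: Box, truth: Box, threshold: float = 0.5) -> bool:
    """Under mAP@0.5, a correctly classified box still needs IoU >= 0.5 to count."""
    return iou(pred, truth) >= threshold

def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall) if precision + recall else 0.0

truth = Box(0, 0, 10, 10)
print(iou(truth, truth))                            # 1.0
print(round(iou(Box(5, 0, 15, 10), truth), 3))      # 0.333
print(is_true_positive(Box(5, 0, 15, 10), truth))   # False
```

A box shifted halfway off the target scores an IoU of about 0.33. Under mAP@0.5 it counts as a miss, even though it partly covers the object.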

The broader literature has caught up to this shift. Akcay and Breckon’s review examined about 130 relevant papers in X-ray security imaging and found that deep learning had moved past older handcrafted-feature pipelines in classification, detection, segmentation, and anomaly detection. The review also points out the big catch: benchmark gains do not erase the field’s hardest problems, especially limited real-world data, heavy occlusion, and domain shift from lab datasets to live checkpoint images.

Section 3: What the Real World Shows

This field has less published airport-wide rollout data than healthcare or logistics, but it does have strong prospective human-in-the-loop evidence.

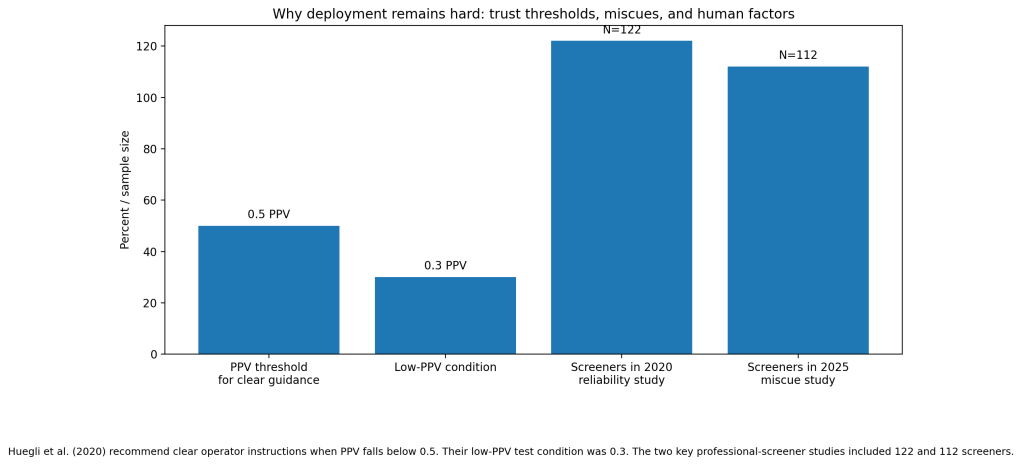

In a 2020 study, Huegli, Merks, and Schwaninger tested 122 professional airport security screeners using realistic X-ray images while varying automation reliability in an explosives detection system for cabin baggage screening. When unaided human performance was lower, automation with higher d-prime produced large detection benefits. But the details mattered. In a low-PPV condition, where the system had a 20% false alarm rate and a PPV of 0.3, officers ignored about half of the true automation alarms on difficult targets. That is a measured result from working screeners, not a benchmark score.
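d-prime (d′) is the signal-detection measure of how well a system separates threat bags from clean ones, independent of how readily it alarms. Here is a minimal sketch of the standard formula, d′ = z(hit rate) - z(false alarm rate), where z is the inverse of the standard normal CDF. The rates below are hypothetical, not the study's values. Rates of exactly 0 or 1 need a correction before the inverse normal can be applied, so the function rejects them.

```python
from statistics import NormalDist

def d_prime(hit_rate: float, false_alarm_rate: float) -> float:
    """Sensitivity index: z(hit rate) - z(false alarm rate)."""
    for p in (hit_rate, false_alarm_rate):
        if not 0.0 < p < 1.0:
            raise ValueError("rates must be strictly between 0 and 1; apply a correction first")
    z = NormalDist().inv_cdf  # inverse CDF of the standard normal distribution
    return z(hit_rate) - z(false_alarm_rate)

# Hypothetical rates for illustration, not figures from the study.
print(round(d_prime(0.9, 0.20), 2))  # 2.12
print(round(d_prime(0.9, 0.05), 2))  # 2.93
```

With the hit rate held at 90%, cutting false alarms from 20% to 5% raises d′ from about 2.12 to 2.93. False alarms alone move the sensitivity score substantially.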

An earlier Applied Ergonomics study by Hättenschwiler et al. tested screeners at two international airports across three modes: no automation, on-screen alarm resolution, and automated decision. Automated decision produced better human-machine detection performance than on-screen alarm resolution and no automation, though it also raised false alarms slightly. The authors concluded that wider implementation would increase explosive detection in passenger bags and that automated decision should be favored over weaker decision-support designs.

A newer 2025 study pushed the warning further. Huegli et al. tested 112 professional screeners and found that when the system gave miscues, meaning it highlighted the wrong location while a non-explosive threat was elsewhere in the bag, officers missed more knives. The lesson is blunt: even good automation can make checkpoint performance worse if the signal appears trustworthy but points attention to the wrong place.

Section 4: The Implementation Gap

The first barrier is false alarms. The 2020 screener study showed a cry-wolf effect: a 20% false alarm rate with PPV 0.3 caused officers to ignore about half of true alarms on difficult targets. That is not a minor usability issue. It means a detector that looks acceptable on paper can underperform in practice because humans stop trusting it.
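The cry-wolf effect is partly a base-rate problem. PPV, the share of alarms that are real threats, depends on how common threats are as well as on the detector's hit and false alarm rates. Below is a minimal Bayes-rule sketch. The 90% hit rate and the prevalence values are hypothetical, and the 20% false alarm rate matches the study's low-PPV condition.

```python
def ppv(hit_rate: float, false_alarm_rate: float, prevalence: float) -> float:
    """Share of alarms that are true threats, via Bayes' rule."""
    true_alarms = hit_rate * prevalence
    false_alarms = false_alarm_rate * (1 - prevalence)
    total = true_alarms + false_alarms
    return true_alarms / total if total else 0.0

# Hypothetical detector: flags 90% of threats and 20% of clean bags.
for prevalence in (0.5, 0.1, 0.01):
    print(f"prevalence {prevalence:>4}: PPV = {ppv(0.9, 0.2, prevalence):.2f}")
```

With the same detector, PPV falls from about 0.82 at 50% prevalence to 0.33 at 10% and 0.04 at 1%. If live threat prevalence is lower than in a validation set, alarms become less trustworthy even though the model has not changed.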

The second barrier is miscuing. The 2025 study showed that miscues pulled attention to the wrong place and increased missed knives. In checkpoint work, location matters as much as classification. A model that says “something is wrong” but highlights the wrong region can damage performance rather than support it.
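One way to make location part of evaluation is to score each bag with a miscue category alongside hits, misses, and false alarms. This is a simplified sketch that assumes one threat per bag and one highlighted region per alarm. The center-in-box rule, the `BagResult` type, and the outcome labels are hypothetical choices for illustration, not the 2025 study's protocol.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)

@dataclass
class BagResult:
    threat_box: Optional[Box]  # ground-truth threat location, None if the bag is clean
    cue_box: Optional[Box]     # region the system highlighted, None if it did not alarm

def center_inside(cue: Box, target: Box) -> bool:
    """Hypothetical localization rule: the cue's center must fall inside the threat box."""
    cx, cy = (cue[0] + cue[2]) / 2, (cue[1] + cue[3]) / 2
    return target[0] <= cx <= target[2] and target[1] <= cy <= target[3]

def outcome(bag: BagResult) -> str:
    """Classify one bag, treating a wrongly placed cue as its own failure mode."""
    if bag.cue_box is None:
        return "miss" if bag.threat_box is not None else "correct_reject"
    if bag.threat_box is None:
        return "false_alarm"
    return "correct_cue" if center_inside(bag.cue_box, bag.threat_box) else "miscue"

bags = [
    BagResult(threat_box=(10, 10, 30, 30), cue_box=(12, 12, 28, 28)),  # cue on the threat
    BagResult(threat_box=(10, 10, 30, 30), cue_box=(60, 60, 80, 80)),  # cue points elsewhere
    BagResult(threat_box=None, cue_box=(40, 40, 50, 50)),              # clean bag, alarm
    BagResult(threat_box=(10, 10, 30, 30), cue_box=None),              # silent on a threat
]
print([outcome(b) for b in bags])
# ['correct_cue', 'miscue', 'false_alarm', 'miss']
```

A bag-level detection metric would count the second bag as a hit because the system alarmed on a threat bag. Scoring the cue location separates it out as a miscue, which is the case the 2025 study links to missed knives.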

The third barrier is data realism. The 2022 review identified lack of large real-world datasets as a core bottleneck. X-ray bags are not like ordinary photos. Objects overlap, density varies by material, and many threat classes are rare by design. That makes training data sparse and deployment brittle. Many models look excellent on curated datasets and then struggle with live baggage complexity.

The fourth barrier is certification, procurement, and workflow redesign. DHS describes modern CT scanners enhanced with AI as the backbone of explosive detection, and TSA had installed about 634 CT units by April 2023 under a major procurement push. But hardware rollouts move through long regulatory, procurement, and checkpoint-redesign cycles, and that slows adoption even when the model side is improving fast.

Section 5: Where It Actually Works

It works best when the tool is tightly defined and the human role is clear. Hättenschwiler’s study found that automated decision outperformed weaker assistive modes, which suggests that half-automation is not always the sweet spot. Huegli’s work adds the missing condition: if a system’s PPV drops too low or its cues are unreliable, the checkpoint needs explicit operator rules, not vague “use your judgment” prompts.

The pattern is simple. Airports get value when the model is reliable enough to earn trust, the interface points to the right place, and operators know exactly how to respond when confidence is low.

Section 6: The Opportunity

Airport screening does not need bigger claims about AI. It needs higher-PPV systems, better cue design, and more checkpoint-grade validation. The research says vision models can beat older baselines. The field studies say the bigger problem is human-machine design.

Takeaways:

- Optimize for PPV and alarm quality, not headline accuracy alone.

- Test miscues and false alarms before deployment, not after rollout.

- Train on real checkpoint imagery with heavy clutter and occlusion.

- Use clear operator rules when PPV falls below 0.5.

- Judge deployment by missed-threat rates and bag-search burden, not benchmark mAP alone.
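The last two takeaways can be turned into a small report built from validation counts. This is a minimal sketch. The counts are hypothetical, it assumes every alarmed bag is searched by hand, and the `low_ppv_protocol` and `standard_protocol` labels are placeholders for whatever operator rules a checkpoint actually defines.

```python
from dataclasses import dataclass

@dataclass
class CheckpointCounts:
    """Bag-level outcome counts from a validation run (hypothetical values below)."""
    threats_alarmed: int  # threat bags the system alarmed on
    threats_missed: int   # threat bags that passed with no alarm
    clean_alarmed: int    # clean bags that alarmed (false alarms)
    clean_cleared: int    # clean bags that passed with no alarm

    @property
    def total_bags(self) -> int:
        return self.threats_alarmed + self.threats_missed + self.clean_alarmed + self.clean_cleared

    @property
    def miss_rate(self) -> float:
        threats = self.threats_alarmed + self.threats_missed
        return self.threats_missed / threats if threats else 0.0

    @property
    def search_rate(self) -> float:
        # Assumption: every alarmed bag, true or false, goes to a manual search.
        alarms = self.threats_alarmed + self.clean_alarmed
        return alarms / self.total_bags if self.total_bags else 0.0

    @property
    def ppv(self) -> float:
        alarms = self.threats_alarmed + self.clean_alarmed
        return self.threats_alarmed / alarms if alarms else 0.0

def operator_rule(counts: CheckpointCounts, ppv_floor: float = 0.5) -> str:
    """Hypothetical switch to a stricter alarm-handling protocol when PPV drops below the floor."""
    return "low_ppv_protocol" if counts.ppv < ppv_floor else "standard_protocol"

run = CheckpointCounts(threats_alarmed=18, threats_missed=2, clean_alarmed=42, clean_cleared=938)
print(f"miss rate {run.miss_rate:.1%}, search rate {run.search_rate:.1%}, PPV {run.ppv:.2f}")
# miss rate 10.0%, search rate 6.0%, PPV 0.30
print(operator_rule(run))  # low_ppv_protocol
```

Reporting miss rate, search rate, and PPV together keeps the trade-off visible. A model tuned to drive the miss rate down can quietly push the search burden up and PPV below the point where operators keep trusting it.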


References

[1] Akcay, Samet, and Toby Breckon. “Towards Automatic Threat Detection: A Survey of Advances of Deep Learning within X-Ray Security Imaging.” Pattern Recognition, vol. 122, 2022, 108245.

[2] Hättenschwiler, Nicole, Yanik Sterchi, Marcia Mendes, and Adrian Schwaninger. “Automation in Airport Security X-Ray Screening of Cabin Baggage: Examining Benefits and Possible Implementations of Automated Explosives Detection.” Applied Ergonomics, vol. 72, 2018, pp. 58–68.

[3] Huegli, David, Sarah Merks, and Adrian Schwaninger. “Automation Reliability, Human-Machine System Performance, and Operator Compliance: A Study with Airport Security Screeners Supported by Automated Explosives Detection Systems for Cabin Baggage Screening.” Applied Ergonomics, vol. 87, 2020, 103094.

[4] Huegli, David, Alain Chavaillaz, Juergen Sauer, and Adrian Schwaninger. “Effects of False Alarms and Miscues of Decision Support Systems on Human-Machine System Performance: A Study with Airport Security Screeners.” Ergonomics, vol. 68, no. 12, 2025, pp. 2088–2103.

[5] Huang, Xinyu, et al. “ScanGuard-YOLO: Enhancing X-ray Prohibited Item Detection with Significant Performance Gains.” Sensors, vol. 24, no. 1, 2024, article 102.

[6] Liang, Tian, et al. “A Study on Detection of Prohibited Items Based on X-Ray Images with Lightweight Model.” Sensors, vol. 25, no. 17, 2025, article 5462.

[7] Wang, Aimin, et al. “Improved YOLOv8 for Dangerous Goods Detection in X-ray Security Images.” Electronics, vol. 13, no. 16, 2024, article 3238.

[8] Transportation Security Administration. “TSA Intercepts 6678 Firearms at Airport Security Checkpoints in 2024.” 15 Jan. 2025.

[9] Transportation Security Administration. “2024 TSA Checkpoint Travel Numbers.”

[10] U.S. Department of Homeland Security. “AI-Enabled Paradigms for Non-Intrusive Screening.” 27 June 2024.
