Section 1: The Problem

In 2022, the National Vulnerability Database recorded 25,064 new vulnerabilities, up from 17,305 in 2019. (Ahmadi Mehri) That pace turns patching into a daily triage problem, not a weekly checklist. (Ahmadi Mehri)

Deadlines tighten while complexity rises. US federal guidance cited in an IEEE patch-management study sets 15 days for critical vulnerabilities and 30 days for high-severity ones, and UK guidance cited there uses 14 days for critical vulnerabilities. (Ahmadi Mehri) Those timelines collide with real operational friction: testing, downtime risk, dependencies, and limited expert bandwidth. (Ahmadi Mehri)

Traditional vulnerability management leans on CVSS severity. (Jacobs) Severity tells you impact if exploited, not how likely exploitation is next month. (FIRST) That mismatch creates a predictable failure mode: teams burn effort patching “high” items that never get used, while attackers focus on a smaller set with active exploit momentum. (Jacobs)

Section 2: What Research Shows

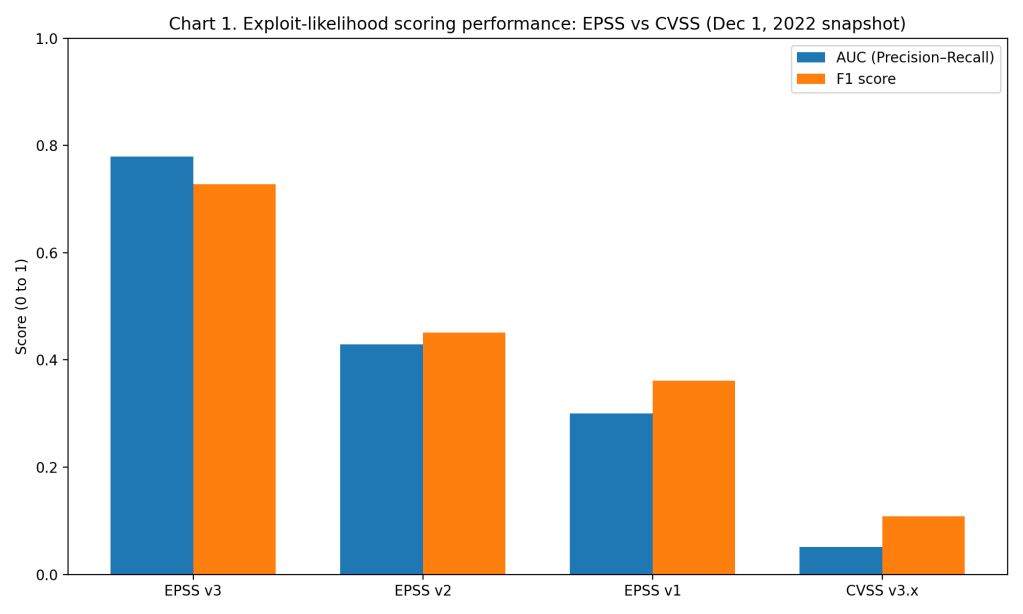

Exploit-likelihood scoring beats severity scoring in retrospective validation. In a Dec. 1, 2022 snapshot study, EPSS v3 achieved a precision–recall AUC of 0.7795 and an F1 score of 0.728. (Jacobs) In the same evaluation, CVSS v3.x base score produced an AUC of 0.051 and an F1 score of 0.108. (Jacobs)

The efficiency gap is the practical headline. At the EPSS v3 F1 threshold, patching only vulnerabilities with EPSS probability at or above 0.36 delivered 78.5% efficiency and 67.8% coverage, while prioritizing 3.5% of published vulnerabilities. (Jacobs) At the CVSS v3.x F1 threshold, CVSS prioritized 13.7% of vulnerabilities yet delivered 6.5% efficiency and 32.3% coverage. (Jacobs)
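The two metrics are simple to compute on your own vulnerability data. The sketch below assumes the definitions used in the EPSS paper: efficiency is the share of prioritized vulnerabilities that were exploited (precision), and coverage is the share of exploited vulnerabilities that were prioritized (recall). The function name, the CVE identifiers, and the scores are hypothetical and only illustrate the arithmetic.

```python
def coverage_and_efficiency(scores, exploited, threshold):
    """Score a "patch everything at or above threshold" strategy.

    scores:    dict of CVE id -> score (EPSS probability or CVSS base score)
    exploited: set of CVE ids with observed exploitation activity
    Returns (effort, coverage, efficiency) as fractions between 0 and 1.
    """
    prioritized = {cve for cve, score in scores.items() if score >= threshold}
    hits = prioritized & exploited
    effort = len(prioritized) / len(scores) if scores else 0.0
    coverage = len(hits) / len(exploited) if exploited else 0.0
    efficiency = len(hits) / len(prioritized) if prioritized else 0.0
    return effort, coverage, efficiency


# Toy data, invented for illustration only.
toy_epss = {"CVE-A": 0.90, "CVE-B": 0.50, "CVE-C": 0.10, "CVE-D": 0.02, "CVE-E": 0.40}
toy_exploited = {"CVE-A", "CVE-D", "CVE-E"}

effort, coverage, efficiency = coverage_and_efficiency(toy_epss, toy_exploited, 0.36)
print(f"effort={effort:.0%} coverage={coverage:.0%} efficiency={efficiency:.0%}")
```

On the toy data, three of five CVEs clear the 0.36 threshold and two of those three were exploited, so coverage and efficiency both come out at two thirds.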

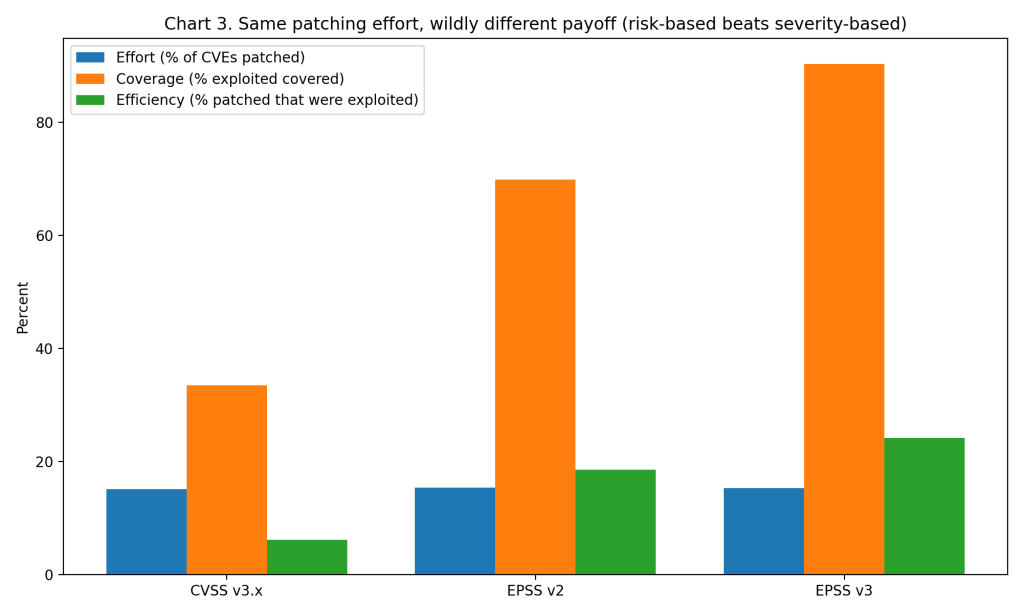

Hold effort constant and the advantage stays. With roughly 15% of CVEs remediated, a CVSS strategy delivered 33.5% coverage and 6.1% efficiency, while an EPSS v3 strategy delivered 90.4% coverage and 24.1% efficiency. (Jacobs) The same patching workload yields about 2.7 times the coverage of exploited vulnerabilities and nearly 4 times the efficiency. (Jacobs)
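A fixed-effort comparison like this one can be sketched by ranking CVEs under each score and patching only the top slice. The code below is a minimal illustration, not the paper's evaluation code: `payoff_at_budget`, the budget of 20%, and all scores and identifiers are hypothetical.

```python
def payoff_at_budget(scores, exploited, budget):
    """Patch the top `budget` fraction of CVEs by score.

    Returns (coverage, efficiency) for that fixed amount of effort.
    """
    k = round(len(scores) * budget)
    chosen = set(sorted(scores, key=scores.get, reverse=True)[:k])
    hits = chosen & exploited
    coverage = len(hits) / len(exploited) if exploited else 0.0
    efficiency = len(hits) / len(chosen) if chosen else 0.0
    return coverage, efficiency


# Toy data, invented for illustration only: the same ten CVEs under two scores.
toy_cvss = {"CVE-01": 9.8, "CVE-02": 9.1, "CVE-03": 7.5, "CVE-04": 8.8, "CVE-05": 5.3,
            "CVE-06": 6.5, "CVE-07": 4.3, "CVE-08": 7.2, "CVE-09": 9.0, "CVE-10": 3.1}
toy_epss = {"CVE-01": 0.01, "CVE-02": 0.02, "CVE-03": 0.85, "CVE-04": 0.03, "CVE-05": 0.60,
            "CVE-06": 0.01, "CVE-07": 0.02, "CVE-08": 0.04, "CVE-09": 0.05, "CVE-10": 0.01}
toy_exploited = {"CVE-01", "CVE-03", "CVE-05"}

for name, scores in (("CVSS", toy_cvss), ("EPSS", toy_epss)):
    coverage, efficiency = payoff_at_budget(scores, toy_exploited, budget=0.2)
    print(f"{name}: coverage={coverage:.0%} efficiency={efficiency:.0%}")
```

Because both strategies patch the same number of CVEs, any difference in the printed numbers comes from ranking quality alone, which is the point of holding effort constant.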

Section 3: What the Real World Shows

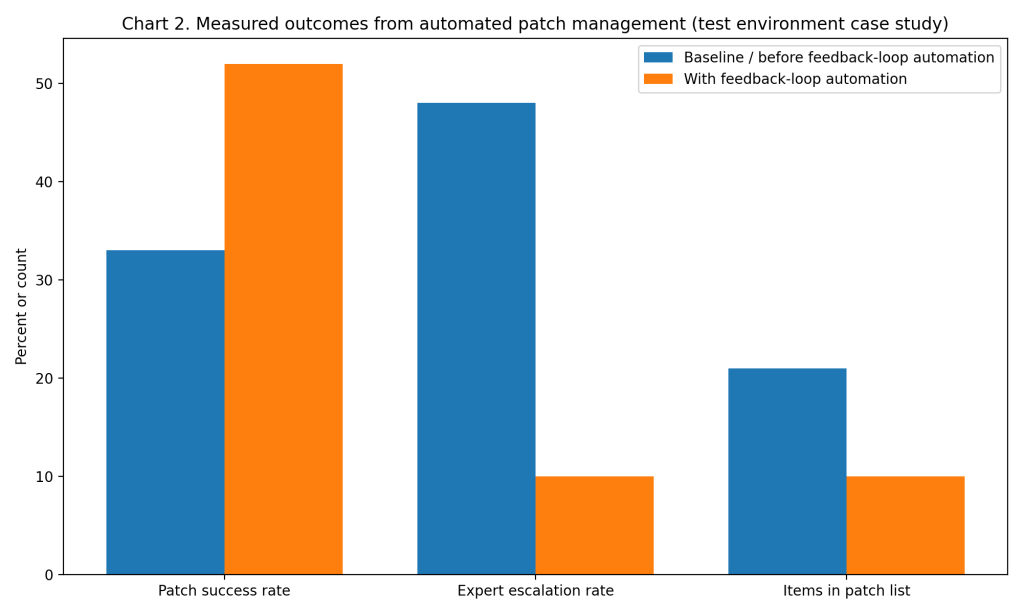

One reason risk-based scoring still fails in practice is that patching is not only a prioritization problem; it is also an execution problem. A refereed IEEE conference paper implemented an automated patch management procedure inside a test environment and measured outcomes across patching “case studies.” (Ahmadi Mehri)

The intervention was a feedback-loop design: the system learned from patch failures, checked dependencies, and adjusted the priority list before deployment. (Ahmadi Mehri) The paper reports a patch success rate of 33% in the baseline case without the feedback loop, rising to 52% with it. (Ahmadi Mehri)
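To make the idea concrete, here is one way such a loop could be structured. This is a sketch under stated assumptions, not the procedure from the paper: the `Patch` fields, the `run_cycle` function, and the rule "escalate anything that failed before" are all hypothetical. It uses `graphlib.TopologicalSorter` from the Python standard library (3.9 or later) so that prerequisites are always attempted before the patches that depend on them.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter


@dataclass(frozen=True)
class Patch:
    cve: str
    priority: float
    requires: tuple = ()  # CVE ids whose patches must be applied first


def run_cycle(queue, deploy, failure_log):
    """Run one patch cycle; return (applied, escalated, held) lists of CVE ids.

    deploy:      callable taking a Patch and returning True on success
    failure_log: set of CVE ids that failed in earlier cycles (mutated in place)
    """
    by_id = {p.cve: p for p in queue}
    sorter = TopologicalSorter()
    for p in queue:
        sorter.add(p.cve, *(d for d in p.requires if d in by_id))
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies

    applied, escalated, seen = [], [], set()
    while sorter.is_active():
        # Among patches whose prerequisites succeeded, go by priority.
        batch = sorted(sorter.get_ready(), key=lambda c: by_id[c].priority, reverse=True)
        if not batch:
            break  # everything left waits on a failed or escalated patch
        seen.update(batch)
        for cve in batch:
            if cve in failure_log:
                escalated.append(cve)  # learned from a past failure: no blind retry
            elif deploy(by_id[cve]):
                applied.append(cve)
                sorter.done(cve)  # unblocks dependents
            else:
                failure_log.add(cve)  # feedback for the next cycle
    held = [p.cve for p in queue if p.cve not in seen]
    return applied, escalated, held
```

A patch that fails is recorded in `failure_log`, so the next cycle routes it to an expert instead of retrying it, and anything that depends on it is held back rather than deployed on top of a broken prerequisite.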

Operational workload improved too. Expert escalation fell from 48% of patches to 10% in the improved case. (Ahmadi Mehri) The prioritization list also shrank from 21 CVEs to 10 after automated review removed unnecessary items and adjusted ordering based on dependencies. (Ahmadi Mehri)

A recent systematic review of AI-powered vulnerability detection and patch management synthesized 29 peer-reviewed studies from 2019–2024 and highlighted a consistent pattern: models report strong performance, but deployment struggles with generalizability, a lack of standard benchmarks, limited adversarial testing, and low trust in black-box outputs. (Malkawi and Alhajj)

Section 4: The Implementation Gap

The first barrier is incentives and compliance structure. Many programs patch to satisfy SLA-style deadlines tied to severity categories, which pushes CVSS-first workflows even when teams know CVSS is not a threat likelihood signal. (Ahmadi Mehri; Jacobs)

The second barrier is false confidence from a single score. EPSS is explicitly a probability of exploitation activity in a near-term window, not an impact score, so teams must combine it with asset criticality, exposure, and business constraints. (FIRST; Orange Cyberdefense) That extra fusion step demands better asset inventory and ownership mapping than many organizations maintain. (Malkawi and Alhajj)
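One simple way to picture that fusion step is a multiplicative score. The sketch below is an assumption for illustration only: neither FIRST nor the cited sources prescribe this formula, and the `Finding` fields, the 1-to-5 criticality scale, and the `exposure_boost` multiplier are hypothetical choices a team would need to calibrate for itself.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    cve: str
    asset: str
    epss: float            # probability of exploitation activity, 0..1
    criticality: int       # hypothetical scale: 1 (low) to 5 (business-critical)
    internet_facing: bool


def priority(finding, exposure_boost=2.0):
    """Hypothetical fusion: likelihood x impact proxy x exposure multiplier."""
    exposure = exposure_boost if finding.internet_facing else 1.0
    return finding.epss * finding.criticality * exposure


def rank(findings):
    """Order findings from most to least urgent under the hypothetical score."""
    return sorted(findings, key=priority, reverse=True)


# Toy findings, invented for illustration only.
findings = [
    Finding("CVE-A", "intranet-wiki", epss=0.90, criticality=1, internet_facing=False),
    Finding("CVE-B", "payment-api", epss=0.40, criticality=5, internet_facing=True),
    Finding("CVE-C", "payment-api", epss=0.02, criticality=5, internet_facing=True),
]
for f in rank(findings):
    print(f.cve, f.asset, round(priority(f), 2))
```

Note that every input other than `epss` comes from the organization's own asset inventory, which is why the ranking is only as good as the ownership and exposure data behind it.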

The third barrier is patch execution risk. The IEEE study emphasizes that patch verification and side effects require deep understanding of system architecture, and vendor patches can introduce instability or new issues. (Ahmadi Mehri) Downtime fear and dependency complexity push administrators toward conservative, slower rollouts, even when exploitation risk is high. (Ahmadi Mehri)

The fourth barrier is workflow integration. Risk-based prioritization only helps if the output turns into tickets, maintenance windows, testing plans, rollback steps, and verified deployment results, at scale. (Ahmadi Mehri) The systematic review flags practical deployment constraints such as robustness and explainability as key reasons high reported performance fails to translate to production. (Malkawi and Alhajj)

The fifth barrier is measurement. Teams often track “patches applied” or “critical CVEs closed,” which rewards volume over risk reduction. (Jacobs) The EPSS analysis shows you can remediate more exploited vulnerabilities with less effort, but only if metrics align to coverage and efficiency, not raw counts. (Jacobs)

Section 5: Where It Actually Works

It works where prioritization and execution are treated as one system. The automated patch management case study improved success rate, reduced escalations, and cut the patch list by using dependency-aware review plus a feedback loop that learned from past failures. (Ahmadi Mehri)

It also works where teams accept probabilistic thinking. EPSS produces calibrated probabilities designed to support short-term exploitation forecasting, which is usable for resource allocation when combined with business impact and exposure context. (Jacobs; FIRST)

Section 6: The Opportunity

Risk-based patching can move from “better scoring” to “better outcomes” when organizations redesign the full loop: prioritize, execute, verify, learn, repeat. (Ahmadi Mehri; Jacobs)

Takeaways for higher adoption

- Replace CVSS-only SLAs with dual targets (exploitation likelihood plus business impact) so teams patch what attackers use first. (Jacobs; FIRST)

- Integrate EPSS into ticketing and change management so “high EPSS” automatically triggers owned actions, windows, and rollback plans. (Ahmadi Mehri; FIRST)

- Add dependency-aware automation and feedback loops to reduce patch failure, expert escalation, and cycle time. (Ahmadi Mehri)

- Track payoff metrics (coverage of exploited vulnerabilities and efficiency of patching effort), not only closure counts. (Jacobs)

- Require explainability and robustness checks for AI-driven tools, because trust and generalization block production rollout. (Malkawi and Alhajj)
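As a rough illustration of the ticketing takeaway, the sketch below turns a scored finding into a ticket with an owner, a due date, and a fixed checklist. Everything here is hypothetical: the field names, the `make_ticket` function, and the defaults are placeholders rather than any ticketing system's API. The 0.36 threshold borrows the F1 threshold reported in the EPSS study and the 15- and 30-day windows echo the guidance figures cited earlier; a real policy would set its own values.

```python
from datetime import date, timedelta

CHECKLIST = ("maintenance window", "rollback plan", "post-deploy verification")


def make_ticket(cve, epss, owner, opened, threshold=0.36,
                expedited_days=15, standard_days=30):
    """Build a ticket dict for one finding. All field names and defaults are hypothetical."""
    expedited = epss >= threshold
    days = expedited_days if expedited else standard_days
    return {
        "title": f"Patch {cve}",
        "track": "expedited" if expedited else "standard",
        "owner": owner or "UNASSIGNED",  # surface missing ownership instead of hiding it
        "due": opened + timedelta(days=days),
        "checklist": list(CHECKLIST),
    }


# Placeholder CVE id and team name, invented for illustration only.
ticket = make_ticket("CVE-0000-0001", epss=0.62, owner="payments-team",
                     opened=date(2024, 1, 1))
print(ticket["track"], ticket["owner"], ticket["due"])
```

The design choice worth copying is that the score never stands alone: it always arrives with an owner, a deadline, and the execution steps attached.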

Data visualizations (actual data used)

Chart 1, Accuracy comparison (EPSS vs CVSS, Dec. 1, 2022 snapshot)

Chart 2, Real-world outcome (Automated patch management case study, success and workload)

Chart 3, Implementation gap snapshot (Same effort, different payoff: CVSS vs EPSS)

References (MLA)

- Ahmadi Mehri, Vida, Patrik Arlos, and Emiliano Casalicchio. “Automated Patch Management: An Empirical Evaluation Study.” Proceedings of the 2023 IEEE International Conference on Cyber Security and Resilience (CSR), 2023, pp. 321–328.

- Jacobs, Jay, et al. “Enhancing Vulnerability Prioritization: Data-Driven Exploit Predictions with Community-Driven Insights.” WEIS 2023, 2023.

- FIRST. “Exploit Prediction Scoring System (EPSS).” FIRST.org, https://www.first.org/epss/.

- Orange Cyberdefense. “Exploring the Exploit Prediction Scoring System.” Orange Cyberdefense Research Blog, 14 June 2024.

- Malkawi, Malek, and Reda Alhajj. “AI-Powered Vulnerability Detection and Patch Management in Cybersecurity: A Systematic Review of Techniques, Challenges, and Emerging Trends.” Machine Learning and Knowledge Extraction, vol. 8, no. 1, 2026, article 19, doi:10.3390/make8010019.
