Section 1: The Problem

Phishing keeps driving record losses. In 2024, the FBI’s Internet Crime Complaint Center received more than 859,000 complaints and logged over $16 billion in reported losses across cyber-enabled crime categories (Federal Bureau of Investigation). Business email compromise and phishing-related schemes remain among the costliest categories.

Phishing works because email recipients extend trust based on apparent identity. Attackers spoof brands, executives, or vendors and rely on one click or one wire transfer. Verizon’s 2025 Data Breach Investigations Report continues to identify the human element and phishing as key breach pathways (Verizon).

Traditional defenses fall into two camps: filtering rules and blacklists on one side, annual awareness training and phishing simulations on the other. Both help, but neither keeps pace with high-volume, well-written, AI-assisted phishing campaigns.

Section 2: What Research Shows

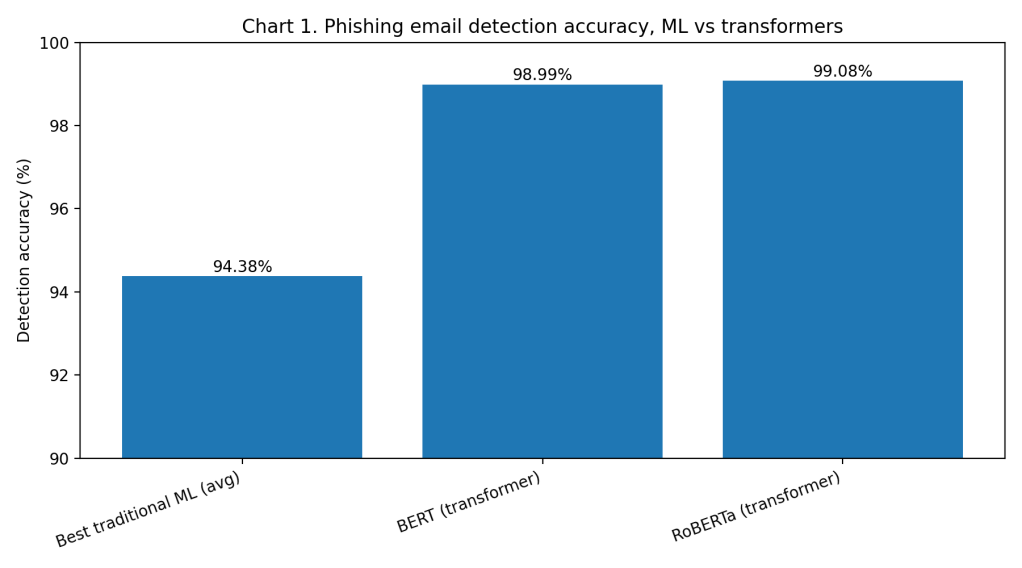

Detection performance in controlled research settings is striking. A 2025 Applied Sciences study evaluated 14 machine learning and deep learning models across 10 datasets. On a merged balanced dataset, RoBERTa achieved 99.08% accuracy and BERT reached 98.99%, outperforming traditional ML by an average margin of 4.7 percentage points (Alhuzali et al.).

That gap reflects generalization power. Transformer-based models capture semantic patterns and contextual cues that rule-based and feature-based classifiers miss. Even high-performing traditional ML approaches plateau lower on the same benchmarks (Alhuzali et al.).

Earlier blacklist-style approaches show inherent limits. One Applied Sciences evaluation reports false positives below 0.1% and false negatives below 8% for blacklist-based detection, while also noting that such systems struggle with zero-day attacks and delayed list updates (Kapan and Gunal).

A 2024 systematic review of deep learning methods for phishing detection emphasizes adaptability to new patterns as the central advantage of deep architectures over static or heuristic models (Kyaw et al.). On benchmark data, at least, the detection problem looks largely solved at the classification layer.

Section 3: What the Real World Shows

The harder problem is behavior change. A large randomized field study at UCSD Health examined simulated phishing campaigns from January to October 2023, covering 19,789 full-time employees after data cleaning (Ho et al.).

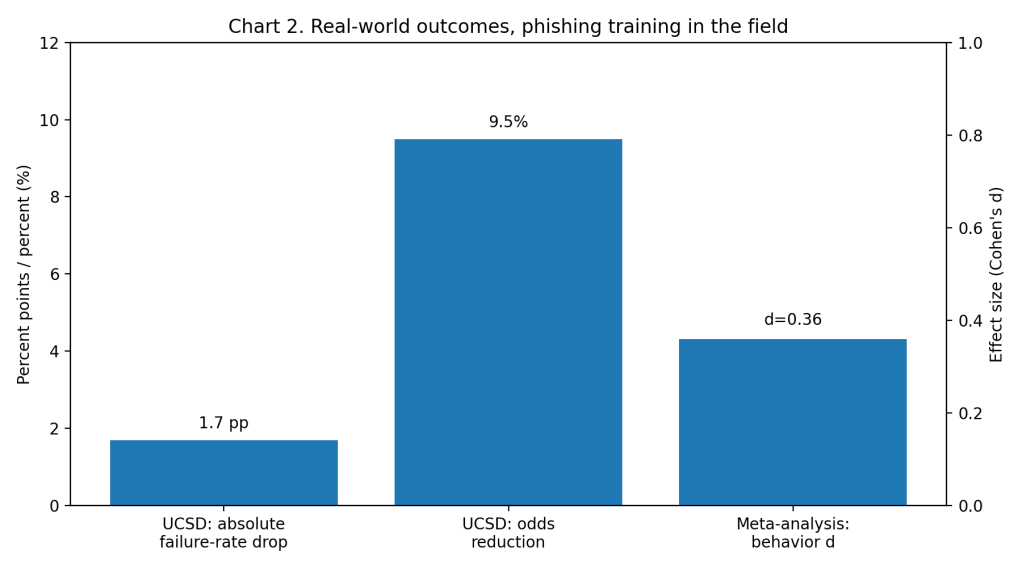

Embedded phishing training produced statistically significant improvements, but the magnitude was modest. The intervention groups had an odds ratio of 0.905 relative to control, meaning about 9.5% lower odds of failing, which translated into roughly a 1.7 percentage point absolute reduction in failure rates (Ho et al.).
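To see why a 9.5% drop in odds turns into such a small absolute change, here is a minimal Python sketch that applies the reported odds ratio to a baseline failure rate. The baseline rates are hypothetical, chosen only for illustration; they are not taken from the study.

```python
def failure_rate_after(baseline_rate: float, odds_ratio: float) -> float:
    """Apply an odds ratio to a baseline failure probability."""
    if not 0.0 < baseline_rate < 1.0:
        raise ValueError("baseline_rate must be strictly between 0 and 1")
    baseline_odds = baseline_rate / (1.0 - baseline_rate)
    treated_odds = baseline_odds * odds_ratio
    # Convert the treated odds back into a probability.
    return treated_odds / (1.0 + treated_odds)


def absolute_reduction_pp(baseline_rate: float, odds_ratio: float) -> float:
    """Absolute reduction in failure rate, in percentage points."""
    treated_rate = failure_rate_after(baseline_rate, odds_ratio)
    return (baseline_rate - treated_rate) * 100.0


ODDS_RATIO = 0.905  # reported by Ho et al.

# Hypothetical baseline failure rates, for illustration only.
for baseline in (0.10, 0.20, 0.30):
    reduction = absolute_reduction_pp(baseline, ODDS_RATIO)
    print(f"baseline {baseline:.0%}: about {reduction:.2f} pp lower")
```

Because the odds ratio is close to 1, the absolute reduction stays at a couple of percentage points or less across these baselines, which matches the modest effect the study describes.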

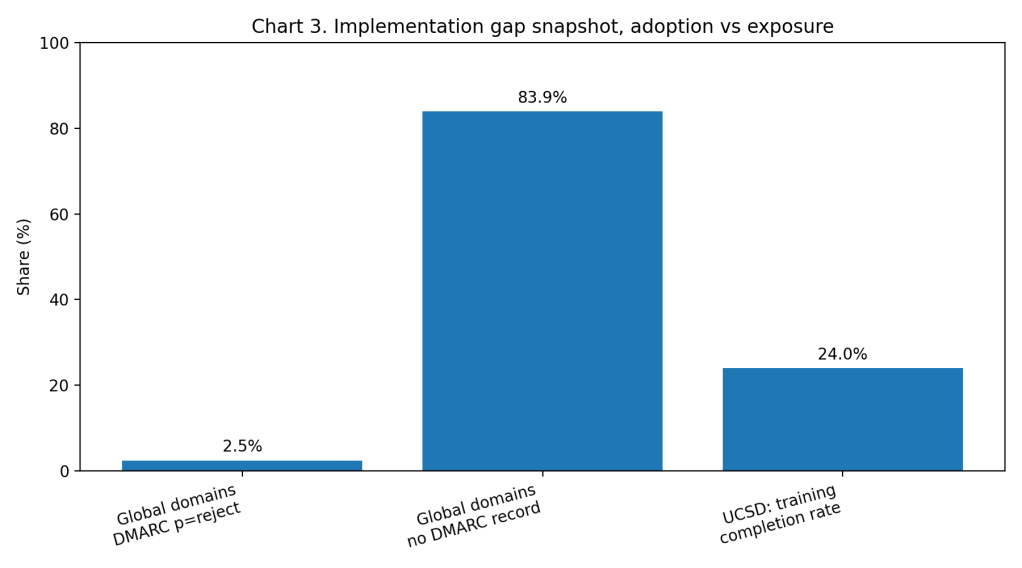

Engagement data explains part of the gap. Average completion of embedded training was 24%. Between 37% and 51% of training sessions showed zero seconds of engagement, and more than 75% of sessions lasted under one minute (Ho et al.). Many users clicked through or closed training immediately.

A 2025 meta-analysis on cybersecurity training reports a positive overall effect on end-user outcomes but finds smaller effect sizes when measuring actual behavior compared to knowledge or attitudes (Prümmer et al.). Training works, but behavior change is incremental and fragile.

Section 4: The Implementation Gap

The first barrier is operational risk tolerance. A model that achieves 99% accuracy in a paper still produces errors at scale. In large organizations processing millions of emails per day, even a 0.1% false positive rate can block thousands of legitimate messages. Security teams must balance precision against business continuity (Alhuzali et al.).
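The arithmetic behind that claim is simple enough to sketch in Python. The daily volume and the share of legitimate mail below are hypothetical inputs, not figures from any cited source; only the 0.1% false positive rate comes from the paragraph above.

```python
def expected_false_positives(
    daily_volume: int,
    legitimate_share: float,
    false_positive_rate: float,
) -> float:
    """Expected number of legitimate emails wrongly blocked per day."""
    legitimate_emails = daily_volume * legitimate_share
    return legitimate_emails * false_positive_rate


# Hypothetical organization: 5 million emails per day, 90% of them legitimate.
blocked = expected_false_positives(
    daily_volume=5_000_000,
    legitimate_share=0.90,
    false_positive_rate=0.001,  # the 0.1% rate discussed above
)
print(f"Expected legitimate emails blocked per day: {blocked:,.0f}")
```

Under these assumptions the filter would wrongly block several thousand legitimate messages every day, each one a potential missed invoice or delayed customer reply.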

The second barrier is authentication adoption. DMARC (Domain-based Message Authentication, Reporting, and Conformance) lets a domain owner tell receiving mail servers what to do with messages that fail SPF or DKIM alignment, including rejecting them outright, yet it remains under-enforced globally. A 2025 Red Sift analysis reports only 2.5% of domains enforce DMARC at p=reject, while 83.9% have no visible DMARC record (Red Sift). That means most domains do not actively reject impersonation attempts.
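For readers who have not seen one, a DMARC policy is a DNS TXT record made of semicolon-separated tags. The sketch below parses a record string and classifies its enforcement level. It is a simplified illustration, not a full validator: the function names are made up for this example, the reporting address is a placeholder, and no DNS lookup is performed.

```python
from typing import Dict, Optional


def parse_dmarc_record(txt: str) -> Dict[str, str]:
    """Split a DMARC TXT record into its tag=value pairs."""
    tags: Dict[str, str] = {}
    for part in txt.split(";"):
        part = part.strip()
        if not part or "=" not in part:
            continue
        name, _, value = part.partition("=")
        tags[name.strip().lower()] = value.strip()
    return tags


def enforcement_level(txt: Optional[str]) -> str:
    """Classify a record as 'no record', 'invalid', or its policy value."""
    if txt is None:
        return "no record"
    tags = parse_dmarc_record(txt)
    if tags.get("v") != "DMARC1":
        return "invalid"
    policy = tags.get("p", "").lower()
    if policy in ("none", "quarantine", "reject"):
        return policy
    return "invalid"


# Placeholder record; the reporting address is hypothetical.
record = "v=DMARC1; p=reject; rua=mailto:dmarc-reports@example.com"
print(enforcement_level(record))
print(enforcement_level("v=DMARC1; p=none"))
print(enforcement_level(None))
```

A policy of p=none only asks receivers to send reports, p=quarantine asks them to treat failing mail as suspicious, and p=reject asks them to refuse it.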

The third barrier is engagement fatigue. The UCSD Health field study shows low training completion and minimal interaction time (Ho et al.). Without sustained attention and reinforcement, knowledge does not translate into durable defensive habits.

The fourth barrier is organizational maturity. CISA’s 2025 Cybersecurity Performance Goals Adoption Report describes measurable improvements when structured practices are adopted and tracked, but it also highlights uneven adoption and resource constraints across organizations (Cybersecurity and Infrastructure Security Agency). Implementation requires monitoring, tuning, and governance, not just model deployment.

Section 5: Where It Actually Works

It works when organizations treat phishing defense as a system. UCSD Health combined Office 365 protections, commercial anti-phishing platforms, and structured repeated simulations, then measured outcomes with randomized cohorts (Ho et al.). That design supports iteration and accountability.

It also works when authentication policies reach enforcement. Domains that implement DMARC at p=reject and monitor alignment close the spoofing pathway rather than merely observing it (Red Sift). Strong policy plus continuous monitoring turns authentication from theory into protection.

Section 6: The Opportunity

Phishing detection technology has matured. The remaining gap lies in enforcement, integration, and behavioral engineering.

Actionable opportunities

• Enforce DMARC at p=reject and monitor reports until alignment errors are resolved (Red Sift).

• Measure phishing resilience with operational metrics such as click rate, report rate, and time-to-containment, not training completion alone (Ho et al.).

• Deploy high-accuracy transformer-based detection models with staged rollout and strict false-positive thresholds (Alhuzali et al.).

• Replace annual training with short, repeated, context-specific interventions validated through field measurement (Ho et al.; Prümmer et al.).

• Align security controls with CISA performance goals and track adoption maturity over time (Cybersecurity and Infrastructure Security Agency).
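As a rough illustration of the operational metrics named in the second bullet, the Python sketch below computes click rate and report rate from simulation results. The record structure and sample data are hypothetical; time-to-containment is left out because its definition depends on each organization's incident process.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SimulationResult:
    recipient: str   # hypothetical identifier for the targeted user
    clicked: bool    # user clicked the simulated phishing link
    reported: bool   # user reported the message to the security team


def click_rate(results: List[SimulationResult]) -> float:
    """Share of recipients who clicked the simulated phish."""
    if not results:
        return 0.0
    return sum(r.clicked for r in results) / len(results)


def report_rate(results: List[SimulationResult]) -> float:
    """Share of recipients who reported the simulated phish."""
    if not results:
        return 0.0
    return sum(r.reported for r in results) / len(results)


# Made-up sample campaign, for illustration only.
sample = [
    SimulationResult("user-a", clicked=True, reported=False),
    SimulationResult("user-b", clicked=False, reported=True),
    SimulationResult("user-c", clicked=False, reported=False),
    SimulationResult("user-d", clicked=False, reported=True),
]
print(f"click rate: {click_rate(sample):.0%}")
print(f"report rate: {report_rate(sample):.0%}")
```

Tracking both numbers per campaign shows whether users are getting better at avoiding and flagging phish, which training completion alone cannot reveal.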

Data Visualizations

Chart 1, Accuracy comparison (phishing email detection benchmarks)

Chart 2, Real-world outcome (UCSD Health field study + behavior effect size)

Chart 3, Implementation gap snapshot (DMARC enforcement + training engagement)

Works Cited

Alhuzali, Abeer, et al. “In-Depth Analysis of Phishing Email Detection: Evaluating the Performance of Machine Learning and Deep Learning Models Across Multiple Datasets.” Applied Sciences, 2025.

Cybersecurity and Infrastructure Security Agency. Cybersecurity Performance Goals Adoption Report. Jan. 2025.

Federal Bureau of Investigation. “FBI Releases Annual Internet Crime Report.” 23 Apr. 2025.

Ho, Grant, et al. “Understanding the Efficacy of Phishing Training in Practice.” Field study, UCSD Health phishing campaigns, Jan.–Oct. 2023.

Kapan, Sibel, and Efnan Sora Gunal. “Improved Phishing Attack Detection with Machine Learning: A Comprehensive Evaluation of Classifiers and Features.” Applied Sciences, 2023.

Kyaw, P. H., et al. “A Systematic Review of Deep Learning Techniques for Phishing Email Detection.” Electronics, 2024.

Prümmer, J., et al. “Assessing the Effect of Cybersecurity Training on End-Users.” Computers & Security, 2025.

Red Sift. “Red Sift’s Guide to Global DMARC Adoption.” 19 Mar. 2025.

Verizon. 2025 Data Breach Investigations Report. 2025.
