The Problem

Tax fraud and aggressive evasion quietly drain hundreds of billions of dollars in public revenue every year, forcing higher rates on compliant taxpayers and squeezing budgets for everything from infrastructure to schools. In the U.S. alone, the IRS's own estimates of the annual “tax gap” have run to several hundred billion dollars in recent years, and similar gaps are documented across OECD and emerging economies. A sizable share comes from under‑reported income and fraudulent claims that are hard to detect with simple rules.

Traditionally, tax agencies have relied on rule-based filters and static risk scores—think “if refund > X and income from source Y, then flag”—plus auditor intuition to decide which returns to scrutinize. These approaches are transparent but brittle: they miss novel fraud patterns, generate many false positives, and struggle as filing channels and schemes evolve (e.g., platform work, crypto, complex cross-border entities). With millions or even hundreds of millions of returns to process annually, even a small improvement in targeting can translate into billions in recovered revenue or fewer unnecessary audits for honest taxpayers.
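To make the contrast concrete, here is a minimal Python sketch of the kind of static rule described above. The record fields, the refund threshold, and the income-source label are hypothetical stand-ins for the prose's X and Y, not any agency's actual criteria.

```python
from dataclasses import dataclass


@dataclass
class TaxReturn:
    # Hypothetical, simplified return record for illustration only.
    return_id: str
    refund: float
    income_sources: frozenset[str]


# Hypothetical rule parameters: the "X" and "Y" from the prose.
REFUND_THRESHOLD = 10_000.0
RISKY_SOURCE = "platform_work"


def rule_flag(ret: TaxReturn) -> bool:
    """Static rule: flag when the refund exceeds X AND income includes source Y."""
    return ret.refund > REFUND_THRESHOLD and RISKY_SOURCE in ret.income_sources


returns = [
    TaxReturn("A", 12_500.0, frozenset({"wages", "platform_work"})),
    TaxReturn("B", 9_900.0, frozenset({"platform_work"})),  # just below the threshold, so never flagged
    TaxReturn("C", 15_000.0, frozenset({"crypto"})),  # income source the rule never anticipated
]
flagged = [r.return_id for r in returns if rule_flag(r)]
print(flagged)
```

Only return A is flagged. If B or C were fraudulent, both would slip through for the same reason: the rule sees only the exact pattern it encodes, so a filer just under the threshold or a scheme routed through an unanticipated income source passes silently.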

Over the last decade, tax administrations in places like the U.S., Australia, and parts of Europe have begun experimenting with machine learning and network analytics to score returns, detect anomalies, and prioritize audits. The research story is clear: these methods often beat legacy systems on accuracy and efficiency—but turning paper wins into standard practice is still a work in progress.

What Research Shows

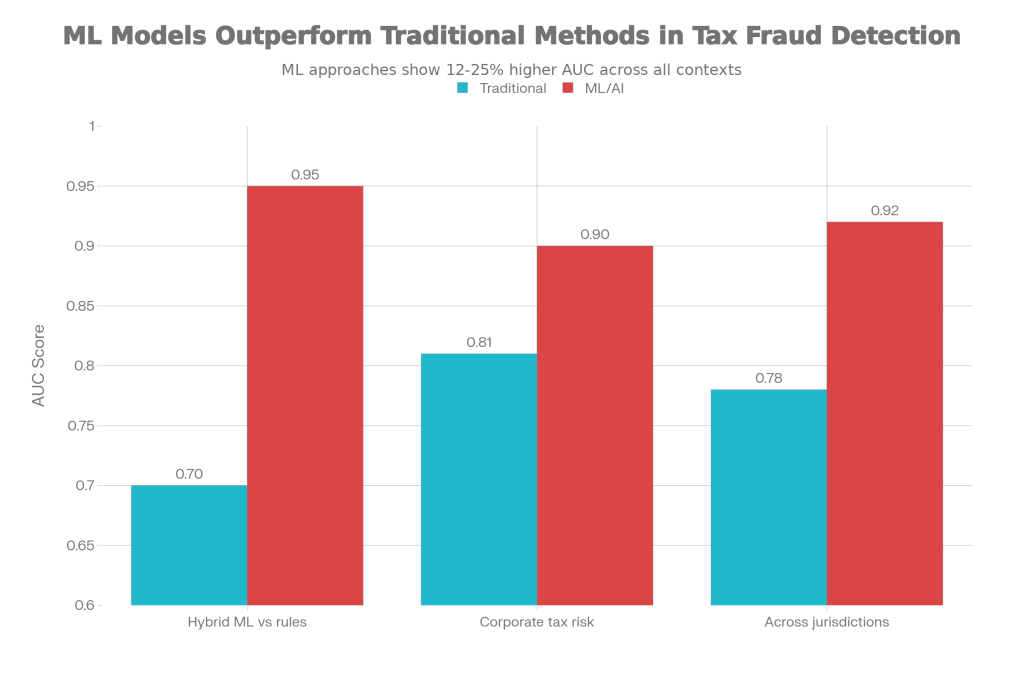

Retrospective modeling studies consistently show machine learning outperforming both rule sets and traditional regression in tax risk prediction. A 2024–2025 study using a realistic synthetic dataset of 10,000 tax returns (10% fraudulent) compared a traditional rule-based fraud filter to a hybrid explainable AI model combining gradient boosting and neural components. The hybrid model achieved about 92% accuracy, 0.95 AUC, 0.88 recall, and 0.91 precision, while the rule-based approach had much weaker recall (around 0.51) and an effective AUC roughly in the 0.70 range on the same data. That translated into the ML model identifying about 73% more fraudulent returns than the rules, at similar or lower false-positive rates.
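The 73% figure follows directly from the two recall values. A short sketch, assuming the comparison counts how many of the 1,000 fraudulent returns in the synthetic dataset (10% of 10,000) each method catches:

```python
def recall(tp: int, fn: int) -> float:
    """Share of truly fraudulent returns that a method flags: TP / (TP + FN)."""
    return tp / (tp + fn)


N_FRAUD = 1_000  # 10% of the 10,000 synthetic returns

# Convert each method's reported recall into a count of fraudulent returns caught.
ml_caught = round(0.88 * N_FRAUD)    # hybrid explainable model
rule_caught = round(0.51 * N_FRAUD)  # rule-based filter

relative_gain = ml_caught / rule_caught - 1
print(ml_caught, rule_caught, f"{relative_gain:.1%}")
```

The model catches 880 fraudulent returns against 510 for the rules, a ratio of about 1.725, or roughly 73% more, which matches the reported figure.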

A 2025 predictive modeling study of corporate tax compliance risks integrated financial ratios, behavioral indicators, and time‑series features for firms and compared multiple algorithms. In that work, logistic regression, a mainstay of traditional compliance scoring, reached an AUC around 0.81, while tree-based models such as XGBoost pushed performance to roughly 0.90 by capturing non‑linear patterns and interactions across 14 key indicators. Feature elimination and cross‑validation confirmed the robustness of the machine learning models and underscored that dynamic behavioral indicators (like sudden changes in reported income or deductions) were especially powerful predictors when modeled properly.
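AUC, the metric used throughout these comparisons, is the probability that a randomly chosen risky case scores higher than a randomly chosen compliant one. The sketch below computes it directly from that definition and pairs it with one hypothetical dynamic indicator, the year-over-year change in reported deductions. The four firms are made-up numbers chosen only to show the mechanics; they are not data from the study.

```python
def auc(scores, labels):
    """Probability that a random positive outranks a random negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def yoy_change(prev, curr):
    """Relative year-over-year change; the floor of 1.0 avoids dividing by a zero prior year."""
    return (curr - prev) / max(abs(prev), 1.0)


# Hypothetical rows: (prior-year deductions, current-year deductions, 1 = later found non-compliant)
firms = [
    (50_000, 52_000, 0),
    (30_000, 29_000, 0),
    (10_000, 25_000, 1),
    (40_000, 60_000, 1),
]
labels = [y for _, _, y in firms]
level_scores = [curr for _, curr, _ in firms]            # static: current deductions only
change_scores = [yoy_change(p, c) for p, c, _ in firms]  # dynamic: sudden change

print(auc(level_scores, labels), auc(change_scores, labels))
```

On these toy rows the raw deduction level cannot separate the two groups (AUC 0.5), while the change feature ranks both risky firms first (AUC 1.0). Models such as XGBoost learn many such effects and interactions at once; the sketch only illustrates why a change can carry signal that a level hides.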

A 2025 systematic review of AI in tax fraud detection found that across jurisdictions, traditional analytics approaches typically reported AUC values in the high 0.7s, while deployed AI/ML systems achieved AUCs up to about 0.92 and reduced false positives by as much as 50%. Studies covering custom ML deployments in large tax administrations reported improvements in detection rates of up to 85% and corresponding increases in recovery rates of around 65% when compared with pre-existing rules and manual selection strategies.

Chart 1: Predictive accuracy (AUC) of traditional vs. machine learning approaches in tax fraud and compliance risk detection

What the Real World Shows

Retrospective metrics are promising, but the real test is whether these systems change revenue and workload in production. The U.S. Internal Revenue Service has gradually rolled out advanced analytics through its Return Review Program (RRP), which screens more than 200 million returns annually. Evaluations cited in recent reviews report that integrating machine learning into RRP increased fraudulent return detection rates by about 40% between 2020 and 2023 while reducing false positives. Processing times for screened returns reportedly fell by roughly 70%, and some reports point to operational cost reductions approaching 45% due to better targeting and automation.

The Australian Taxation Office’s “Smarter Data” program is another large-scale case. By combining machine learning, graph analysis, and third‑party data, the ATO reports that analytics-enabled compliance activities have significantly boosted voluntary compliance and recovery of underpaid taxes. Public summaries suggest that AI-driven risk models contributed to a 65% increase in recovery rates for identified fraudulent activities and improvements in early detection that allowed faster intervention before refunds were issued.

On the broader policy side, OECD’s work on advanced analytics documents randomized trials and quasi-experiments showing that targeted “nudge” letters and threat-of-audit communications, guided by predictive models, can meaningfully increase timely filing and payment rates. While those studies often focus on behavior rather than complex fraud, they confirm that analytics-driven segmentation and messaging outperform uniform communication strategies on real compliance outcomes.

A 2022 article on big data analytics in IRS audit procedures found that after the shift toward more data-driven case selection, audited taxpayers displayed higher subsequent compliance, and the overall audit yield per case improved due to better targeting. That kind of prospective evidence—“better models changed who we audit, and that changed revenue and behavior”—is the missing link in many other domains and is starting to accumulate here.

The Implementation Gap

Despite these wins, most tax administrations still run a patchwork of legacy rule systems, manual scoring spreadsheets, and small pilot ML projects. A central barrier is data and infrastructure: high-quality training data requires linking returns with downstream audit outcomes, third‑party reports, and sometimes bank or customs data under strict legal and privacy constraints. Many tax authorities, especially in low- and middle‑income countries, lack the secure infrastructure and staff to build and maintain such pipelines at scale.

Another gap is interpretable, actionable output. Auditors and legal teams need to understand why a return was flagged, not just see a risk score. Reviews note that when ML models behave as black boxes, auditors may ignore their recommendations or revert to manual heuristics, especially if they fear that decisions based on opaque scores could be challenged in court. That is why explainable AI (e.g., SHAP values, feature importance dashboards) is now a major theme in new fraud detection systems, but many deployed tools still fall short.
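For linear models, SHAP values have a simple closed form: with independent features, each feature's contribution is its weight times the feature's deviation from its average, φ_i = w_i (x_i − E[x_i]), measured here in log-odds. The sketch below assumes a hypothetical logistic-regression risk model; the feature names, weights, and population means are invented for illustration. Tree ensembles and neural models need the general SHAP algorithms instead.

```python
import math

# Hypothetical logistic-regression risk model (coefficients on the log-odds scale).
WEIGHTS = {"refund_to_income": 2.0, "deduction_yoy_change": 1.5, "third_party_mismatch": 3.0}
BIAS = -4.0
# Hypothetical population means, used as the explanation's baseline.
MEANS = {"refund_to_income": 0.1, "deduction_yoy_change": 0.05, "third_party_mismatch": 0.02}


def log_odds(x):
    return BIAS + sum(WEIGHTS[f] * x[f] for f in WEIGHTS)


def risk_score(x):
    """Convert log-odds into a probability-style risk score."""
    return 1.0 / (1.0 + math.exp(-log_odds(x)))


def linear_shap(x):
    """Exact SHAP values for a linear model with independent features: w_i * (x_i - mean_i)."""
    return {f: WEIGHTS[f] * (x[f] - MEANS[f]) for f in WEIGHTS}


flagged_return = {"refund_to_income": 0.6, "deduction_yoy_change": 1.25, "third_party_mismatch": 0.5}
phi = linear_shap(flagged_return)

print(f"risk score: {risk_score(flagged_return):.2f}")
# List contributions from largest to smallest magnitude, as an auditor-facing view might.
for feature, contribution in sorted(phi.items(), key=lambda kv: -abs(kv[1])):
    print(f"{feature:>22}: {contribution:+.2f} log-odds")
```

The contributions add up exactly to the gap between this return's log-odds and the log-odds of an average return, which lets an auditor read the explanation as a breakdown of the flag rather than a loose ranking of features.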

There is also an organizational incentive problem. Traditional rule-based systems and manual selection processes are embedded in regulations, audit manuals, and performance metrics. Moving to ML-based risk scoring often requires changes in legislation, procurement, work design, and staff training—things that take years and can be politically sensitive, particularly if they increase scrutiny on certain sectors or high‑income individuals. Some reviews describe pilot systems that showed clear performance gains but were never scaled because leadership shifted priorities or feared public pushback about “AI in tax enforcement.”

Finally, model performance can degrade as fraudsters adapt. Systematic reviews emphasize that tax evasion schemes evolve quickly, and static models trained on historical patterns can miss new forms of abuse. Without ongoing monitoring, regular retraining, and feedback loops from audit outcomes, even a high‑performing model can slip back toward mediocre detection and rising false positives. Many administrations do not yet have the MLOps capacity to run this continuous improvement cycle.
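One common way to watch for drift is the Population Stability Index (PSI), which compares how a variable, such as the model's risk score, is distributed across bins now versus at training time. A minimal stdlib sketch, with hypothetical bin edges and sample values:

```python
import math
from bisect import bisect_right


def bin_shares(values, edges):
    """Fraction of values in each bin; `edges` are sorted interior cut points."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(reference, current, edges, eps=1e-6):
    """Population Stability Index: sum over bins of (a - e) * ln(a / e)."""
    e = bin_shares(reference, edges)
    a = bin_shares(current, edges)
    # eps keeps the log finite when a bin is empty in one of the samples
    return sum((ai - ei) * math.log((ai + eps) / (ei + eps)) for ei, ai in zip(e, a))


EDGES = [0.25, 0.5, 0.75]  # hypothetical score bins
training_scores = [0.05, 0.1, 0.15, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
current_scores = [0.5, 0.55, 0.6, 0.65, 0.7, 0.75, 0.8, 0.85, 0.9, 0.95]

print(f"{psi(training_scores, training_scores, EDGES):.3f}")  # identical samples
print(f"{psi(training_scores, current_scores, EDGES):.3f}")   # scores shifted upward
```

A frequently cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, though thresholds should be set locally. PSI only detects changes in inputs or scores; fraud that adapts while looking statistically ordinary still requires feedback from audit outcomes.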

Where It Actually Works

The more successful implementations share a few traits. Large agencies like the IRS, ATO, and some European tax offices built dedicated analytics units that sit close to both IT and audit operations, allowing rapid iteration and direct feedback from frontline auditors. They also started with high‑volume, high‑impact use cases—such as refund fraud or specific credits—where improved targeting quickly paid for the investment in data and models.

Another common feature is a focus on explainability and human‑in‑the-loop design. The hybrid explainable AI system described in one 2025 study used techniques like SHAP and attention heatmaps so auditors could see which features drove a given risk score. That transparency helped bridge the trust gap and allowed auditors to use the model as a triage tool rather than a replacement for professional judgment.
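A model used as a triage tool orders the work queue rather than deciding cases. A minimal sketch, assuming a calibrated fraud probability and a hypothetical estimate of the adjustment at stake for each case, ranks returns by expected yield and fills a fixed review capacity:

```python
import heapq
from typing import NamedTuple


class ScoredReturn(NamedTuple):
    return_id: str
    fraud_prob: float      # model risk score, assumed to be calibrated
    est_adjustment: float  # hypothetical estimate of the assessment if fraud is confirmed


def triage(queue, capacity):
    """Return the `capacity` cases with the highest expected yield for human review."""
    return heapq.nlargest(capacity, queue, key=lambda r: r.fraud_prob * r.est_adjustment)


cases = [
    ScoredReturn("R1", 0.90, 1_000.0),
    ScoredReturn("R2", 0.30, 20_000.0),
    ScoredReturn("R3", 0.60, 5_000.0),
    ScoredReturn("R4", 0.05, 50_000.0),
]
for r in triage(cases, capacity=2):
    print(r.return_id)
```

Ranking by expected yield rather than raw probability is an assumption of this sketch; R1 has the highest probability but little at stake, so it drops out. The selected cases still go to an auditor, who makes the actual determination.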

The Opportunity

Tax agencies already have rich data and clearly defined, high-stakes decisions to make; the data science itself is not the bottleneck. The opportunity lies in building durable, accountable systems that turn predictive gains into routine, fair enforcement and lighter burdens on honest filers.

- Invest in secure data platforms that link filings, third‑party reports, and audit outcomes to support continuous model training.

- Prioritize explainable models and auditor-facing tools that show why a case is high risk, not just that it is.

- Embed randomized and quasi-experimental tests to measure actual revenue, compliance, and false-positive impacts, not just AUC.

- Start with narrow, high‑ROI use cases (e.g., refund fraud, high‑risk industries) and scale gradually as governance and trust grow.

- Develop MLOps capacity—regular retraining, monitoring, and drift detection—to keep models effective as evasion tactics evolve.
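The randomized-testing recommendation can be sketched in a few lines: randomly split eligible cases into treatment and control, then test whether an outcome rate (for example, on-time filing after a model-targeted letter) differs between the groups. The split function, seed, and outcome counts below are hypothetical, and the two-proportion z-test is a standard textbook choice rather than any agency's prescribed method.

```python
import math
import random


def randomize(case_ids, seed=2024):
    """Randomly split case IDs into equal-sized treatment and control groups."""
    rng = random.Random(seed)  # fixed seed so the assignment is reproducible and auditable
    shuffled = list(case_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def two_proportion_ztest(successes_t, n_t, successes_c, n_c):
    """Two-sided z-test for a difference in proportions, using the pooled standard error."""
    p_t, p_c = successes_t / n_t, successes_c / n_c
    pooled = (successes_t + successes_c) / (n_t + n_c)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_t + 1 / n_c))
    z = (p_t - p_c) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value under the normal approximation
    return p_t - p_c, z, p_value


treatment, control = randomize(range(10_000))
# Hypothetical outcome counts: taxpayers who filed on time in each group.
diff, z, p = two_proportion_ztest(3_300, len(treatment), 3_100, len(control))
print(f"lift={diff:+.3f}, z={z:.2f}, p={p:.2g}")
```

Because assignment is random, a difference in rates can be attributed to the intervention rather than to who was selected, which is exactly what a retrospective AUC cannot show.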

References

OECD. “Advanced Analytics for Better Tax Administration.” 2016 (with 2020+ implementation updates).

GSC Advanced Research. “Leveraging Artificial Intelligence for Enhanced Tax Fraud Detection in Modern Tax Administrations.” 2024.

Li, X. et al. “Predictive Modeling of Tax Compliance Risks: A Comparative Study of Machine Learning Approaches.” 2025.

“Proactive Detection of Tax Fraud Using Explainable AI Techniques.” IACIS Issues in Information Systems, 2025.

“Tax Fraud Detection Using AI: A Systematic Review (JRFN 18).” 2025.

Savic, M. et al. “Tax Evasion Risk Management Using a Hybrid Unsupervised Outlier Detection Method.” Expert Systems with Applications, 2022.

IRS. “Big Data Analytics in IRS Audit Procedures and Its Effects on Tax Compliance.” Journal of the American Taxation Association, 2022.

Wei, L. et al. “Detecting Tax Evasion and Financial Crimes in the United States.” 2023.
